Last week, I was on holiday. That is, I took 5 days off work in which I could have relaxed, read novels, watched TV, played lots of games, gone for long walks in the countryside and (in happier, less Covid-19 times) spent time away from home, wrapped up warm enjoying a view of waves crashing onto a beach. Instead, I spent those 5 days – and a fair amount of the 4 weekend days that bracketed them – squirreled away in my office, sitting at my computer. Which is rather similar to what I would have been doing if I hadn’t taken the time off.

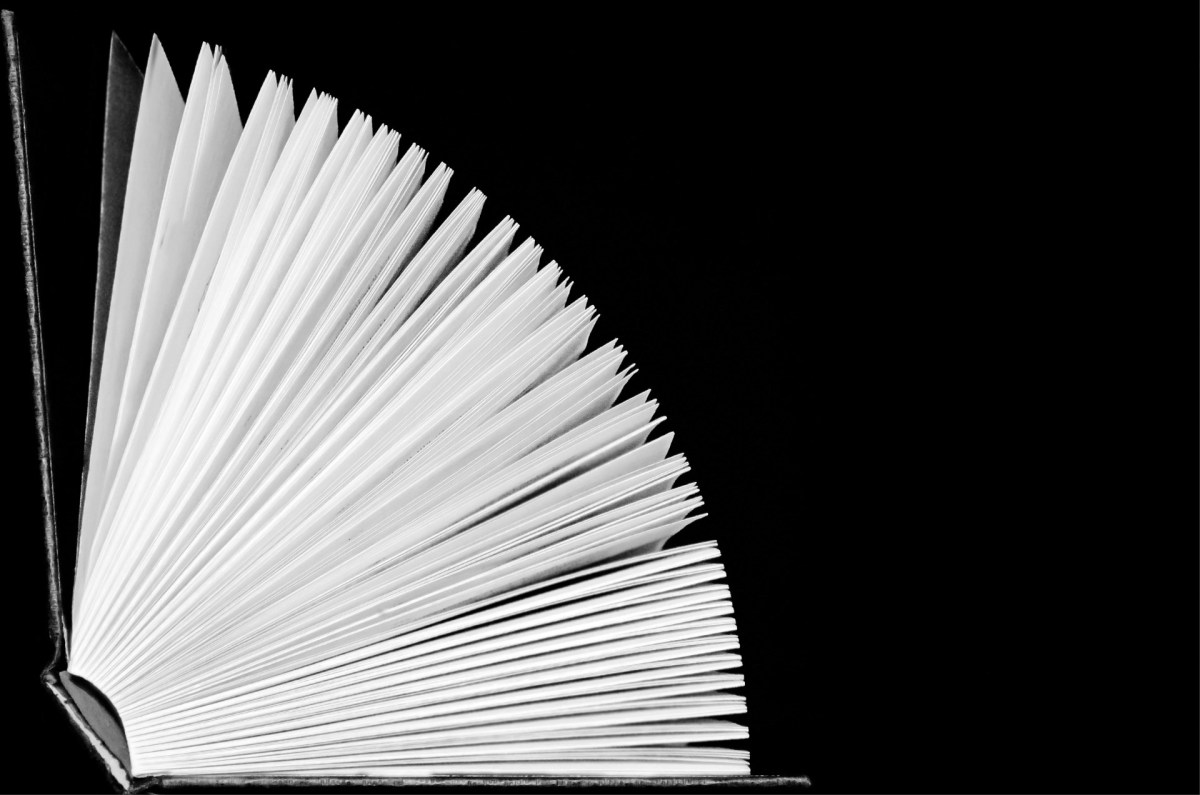

The reason I did this is that I’m writing a book at the moment. Last week I managed to write well over 12,500 words of it, taking me to more than 80% of my projected word count (over 82% if you count bibliographic material), so the time felt well-spent. But why am I doing this in the first place?

I’m doing it at one level because I have a contract to do it. Around July-August last year (2019), I sent emails to a few (3-4) publishers pitching the idea for a book. I included a detailed Table of Contents, evidence that I’ve written before (including some links to this blog), and a bit about me, including a link to my LinkedIn profile. One of the replies I had (within 24 hours, to my amazement) was from Jim Minatel, a commissioning editor from Wiley Technology. Over a number of weeks, I talked to him and editors from other publishers, finally signing a contract with Wiley for a book on Trust in Computing and the Cloud, with a planned word count of 125,000 words. I’m not going to provide details of the contract, but I can say that:

- I don’t expect it to make me rich (which was never the point anyway);

- it has options for another book or books (I must be insane even to be considering the idea, but Jim and I have already had some preliminary conversations);

- there are clauses in it about film/movie rights (nobody, but nobody, is ever going to want to make or watch a film about this book: fascinating as it may be, Tom Clancy or John Grisham it is not).

This kind of explains why I spent last week closeted in my office, tapping away on a computer keyboard, but why did I get in touch with these publishers in the first place, pitching the idea of a book that is consuming a fair number of my non-work waking hours?

The basic reason is that I got cross. In fact, I got so annoyed about something that I went to a couple of people – my boss was one, a good friend with a publishing background was another – and announced that I planned to write a book. I was half hoping that they would dissuade me, but they both enthusiastically endorsed the idea, which meant that I had, at least in my head, now committed to doing it. This was in early May 2019, and it took me a couple of months to gather my thoughts, put together some materials and find a few candidate publishers before actually pitching the book to them and ending up with a contract.

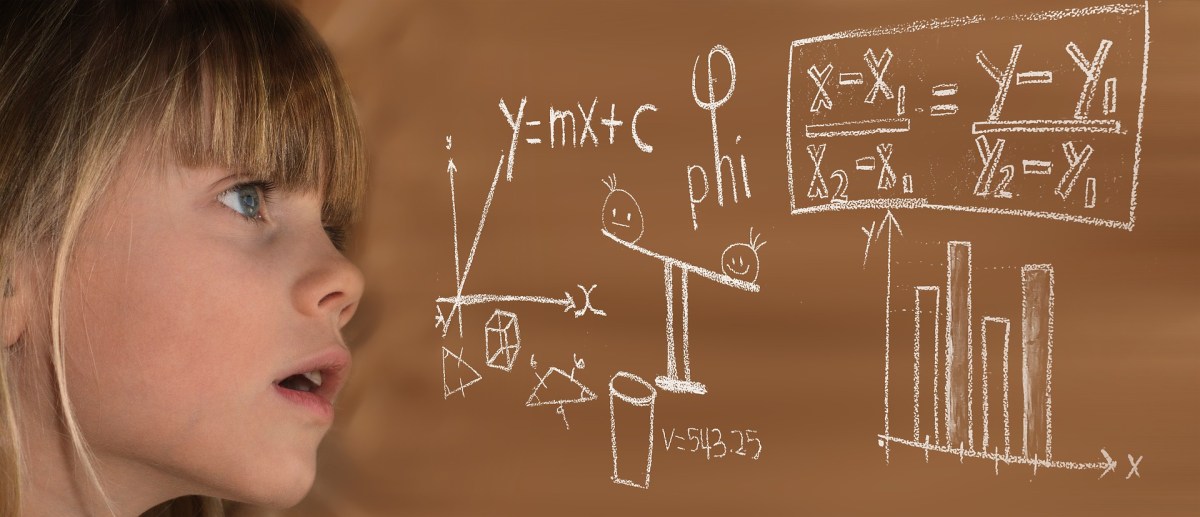

But what actually got me to a position where I was cross enough about something to pitch an idea which would take up so much of my time and energy? The answer? I was tipped over the edge by hearing someone speak about trust in a way that made it clear that they had no idea what they were talking about. It was a session at a conference – I can remember the conference, but I can’t remember the session or the speaker – where the subject was security: IT security. This is my field, so that’s good. What wasn’t good was what the speaker said when speaking about trust: it didn’t hold together, it wasn’t consistent with how I felt about trust, and I didn’t feel that it was broadly applicable to computing or the Cloud.

This made me cross. And then I thought: why is there no consensus on what we mean by “trust”? Why do people talk about “zero trust” without really knowing what trust actually means? Why is there no literature on this subject that I’ve been thinking about for nearly 20 years? Why do I keep having conversations with people where they agree that trust is really interesting, but we discover that we don’t have a common starting point as there’s no theoretical underpinning describing exactly what we’re discussing? Why are people deploying important workloads and designing business-critical systems without a good framework around what seems like a fundamental concept that everybody is always eager to include in their architectures? Why are we pushing forward with Confidential Computing when people don’t even understand the impact of the trust relationships which underlie it?

And I realised that I could keep asking these questions, and keep having these increasingly frustrating conversations, whilst waiting for somebody to publish some sort of definitive meisterwerk on the subject, or I could just admit that no-one was going to do it, and that I might get on and write something, even if it was never going to be the perfect treatment of what is, after all, a very complex subject.

And so that’s what I decided to do. I thought about what interests me in the field of trust in the realms of computing and the Cloud, about what I’ve heard people talk very badly about, and about what I’d had interesting conversations about, decided that I might have something to say about all of those, and then put together a book structure. Wiley liked the idea, and asked me to flesh it out and then write something. It turns out that there’s loads of literature around human-to-human and human-to-organisational trust, and also on human-to-computer system trust, but very little on how computers can trust each other. Given how many organisations run much of their business in the Cloud these days, and the complex trust relationships that exist there, I wanted to write something about how to manage and understand these.

These are topics I’ve thought about (and, increasingly, written about) for around 20 years, since I did some research into the possibility of a PhD (which never materialised) in a related topic. They’ve stayed with me since, and I was involved in some theoretical and standards-based work around trust while involved with the ETSI NFV group nearly 10 years ago. I’m not pretending that I’m perfectly qualified to discuss this topic, but then again, I’m not sure that anybody is, and I feel that putting out some sort of book on this topic makes sense, if only to get the conversation started, and to give people an opportunity to converse with a shared language. The book starts with some theoretical underpinnings, looks at some of the technologies, what their implications are, the place of open source, the commercial and organisational impacts, and then suggests some future and frameworks. I hope to have the manuscript (well, typescript) completed and with Wiley by mid-spring (Northern Hemisphere) 2021: I don’t know when it’s actually likely to appear in print.

I hope people find it interesting, and that it acts as a catalyst for further discussion. I don’t expect it to be the last word on the subject – in fact I hope it’s not – but I do hope that it forces more people to realise that trust is really important in our world of computers, security and risk, and currently ill-understood. And if you happen to be a successful producer of Hollywood blockbusters, then I’m available to talk. Just as soon as I get these last couple of chapters submitted...