Just before Christmas – about 6 months ago, it feels like – I published a blog post to announce that I’d finished writing my book: Trust in Computing and the Cloud. I’ve spent much of the time since then in shock that I managed it – and feeling smug that I delivered the text to Wiley some 4-5 months before my deadline. As well as the core text of the book, I’ve created diagrams (which I expect to be redrawn by someone with actual skills), compiled a bibliography, put together an introduction, written a dedication and rustled up a set of acknowledgements. I even added a playlist of some of the tracks to which I’ve listened while writing it all. The final piece of text that the publisher is expecting is, I believe, a biography – I’m waiting to hear what they’d like it to look like.

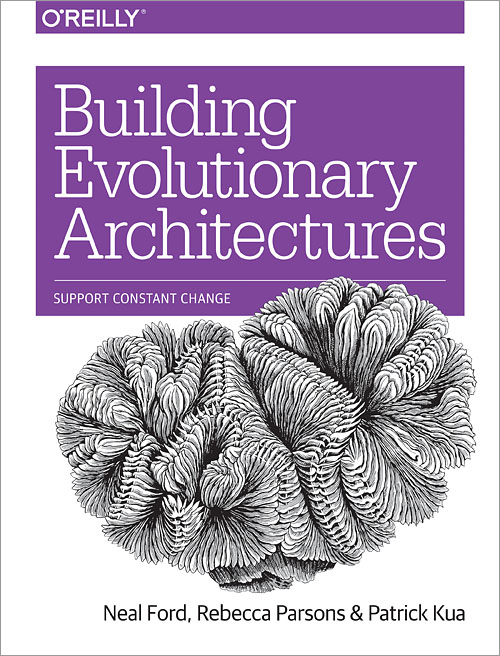

All that said, I’m aware that the process is far from over: there is lots of editing still to be done, from checking my writing to correcting glaring technical errors. There’s an index to be created (thankfully this is not my job – it’s a surprisingly complex task best carried out by those with skill and experience in it), some chapters and sections to be renamed, and decisions to be made on design issues like the cover (I hope to have some input, but don’t expect to be the final arbiter – I know my limits[1]). And then there’s the actual production process – in which I don’t expect to be particularly involved – followed by publicity and, well, selling copies. After which comes the inevitable fame, fortune and beach house in Malibu[2]. So, there’s lots more to do: I also expect to create a website to go with the book – I’ll work with my publisher on this closer to the time.

Having spent over a year writing a book (and having written a few fiction works which nobody seemed that interested in taking up), I’m still not entirely sure how I managed it, so instead of doing the obvious “how to write a book” article, I thought I’d provide an alternative, which I feel fairly well qualified to produce: how not to write a book. I’m going to assume that, for whatever reason, you are expected to write a book, but that you want to make sure that you avoid doing so, or, if you have to do it, that you’ll make the worst fist of it possible: a worthy goal. If you’re in the unenviable position of having to write a book: read this.

1. Avoid passion

If you don’t care about your subject, you’re on good ground. You’ll have little incentive to get your head into the right space for writing, because, well, meh. If you’re not passionate about the subject, then actually buckling down and writing the text of the book probably won’t happen. If, somehow, a book does get written, then it’s likely that any readers who pick it up will fast realise that the turgid, disinterested style[3] you have adopted reflects your ennui with the topic, and they won’t get much further than the first few pages. Your publisher won’t ask you to produce a second edition: you’re safe.

2. Don’t tell your family

I mean, they’ll probably notice anyway, but don’t tell them before you start, and certainly don’t attempt to get their support and understanding. Failing to write a book is going to be much easier if your nearest and dearest barge into your workspace demanding that you perform tasks like washing up, tidying, checking their homework, going shopping, fixing the Internet or “speaking to the children about their behaviour because I’ve had enough of the little darlings and if you don’t come out of your office right now and take over some of the childcare so that I can have that gin I’ve been promising myself, then I’m not going to be responsible for my actions, so help me.”[4]

3. Assume you know everything already

There’s a good chance that the book you’re writing is on a topic about which you know a fair amount. If this is the case, and you’re a bit of an expert, then there’s a danger that you’ll realise that you don’t know everything about the subject: there’s a famous theory[5] that those who are inexpert think they know more than they do, whereas those who are expert may actually believe they are less expert than they are. Going by this theory, if you don’t realise that you’re inexpert, then you’re sorted, and won’t try to find more information, but if you’re in the unhappy position of actually knowing what you’re up to, you will need to make an effort to avoid referencing other material, reading around the subject or similar. Just put down what you think about the issue, and assume that your aura of authority and the fact that your words are actually in print will be enough to convince your readers (should you get any).

4. Backups are for wimps

I usually find that when I forget to make a backup of a work and it gets lost through my incompetence, power cuts, cat keyboard interventions and the like, it comes out better when I rewrite it. For this reason, it’s best to avoid taking backups of your book as you produce it. My book came to almost exactly 125,000 words, and if I type at around 80wpm, that’s only 1,500 minutes[6] or 60(ish) days of writing. And it’ll be better second time around (see above!), so everybody wins.

5. Write for everyone

Your book is going to be a work of amazing scholarship, but accessible to humanities (arts) and science graduates, school children, liberals and conservatives alike: an easy read of great gravitas. Even if you’re not passionate about the subject (see 1), your publisher is keen enough on it to have agreed to publish your book, so there must be a market – and the wider the market, the more they can sell! For that reason, you clearly want to ensure that you don’t try to write for a particular audience (lectience?), but change your style chapter by chapter – or, even better, section by section or paragraph by paragraph.

6. Ignore deadlines

Douglas Adams said it best: “I love deadlines. I love the whooshing noise they make as they go by.” Your publisher has deadlines to keep themselves happy, not you. Write when you feel like it – your work is so good (see 5) that it’ll stay relevant whenever it finally gets published. Don’t give in to the tyranny of deadlines – even if you agreed to them previously. You’ll end up missing them anyway as you rewrite the entirety of the book when you lose the text and have no backup (see 4).

7. Expect no further involvement after completion

Once you’ve written the book, you’re done, right? You might tell a couple of friends or colleagues, but if you do any publicity for your publisher, or post anything on social media, you’re in danger of it becoming a success and having to produce a second edition (see 1). In fact, you need to put your foot down before you even get to that stage. Once you’ve sent your final text to the publisher, avoid further contact. Your editor will only want you to “revise” or “check” material. This is a waste of your time: you know that what you produced was perfect first time round, so why bother with anything further? Your job was authoring, not editing, revising or checking.

(I should apologise to everyone at Wiley for this post, and in particular the team with whom I’ve been working. You can rest assured that none[7] of these apply to me – or you.)

1 – my wife and family would dispute this. How about “I know some of my limits”?

2 – maybe not, if only because I associate Malibu with a certain rum-based liqueur and ill-advised attempts to appear sophisticated at parties in my youth.

3 – this is not to suggest that authors who are interested in their book’s subject don’t sometimes write in a turgid, disinterested style. I just hope that I’ve managed to avoid it.

4 – disclaimer: getting their support doesn’t mean that you won’t have to perform any of these tasks, just that there may be a little more scope for negotiation. For the first couple of weeks or so, at least.

5 – I say it’s famous, but I can’t be bothered to look it up or reference it, because I assume that I know enough about the topic already. See? It’s easy when you know.

6 – it’s also worth avoiding accurate figures in technical work: just round in whichever direction you prefer.

7 – well, probably none. Or not all of them, anyway.