If there’s one difference that you can use to spot someone who takes security seriously, it’s this: they don’t make absolute statements about security. I’m going to be a bit contentious here, and I’m sorry if it upsets some people who do take security seriously, but I’m of the very strong opinion that we should never, ever say that something is “completely secure”, “hack-proof” or even just “secured”. I wrote a few weeks ago about lazy journalism, but it pains me even more to see or hear people who really should know better using such absolutes. There is no “secure”, and I’d love to think that one day I can stop having to say this, but it comes up again and again.

We, as a community, need to be careful about the words and phrases that we use, because it’s difficult enough to educate the rest of the world about what we do without allowing non-practitioners to believe that we (or they) can take a system or component and make it so safe that it cannot be compromised or go wrong. There are two particular bugbears that are getting to me at the moment – and that’s before I even start on the one which rules them all, “zero-trust”, which makes my skin crawl and my hackles rise whenever I hear it used[1] – and they are (as you may have already guessed from the title of this article):

- quantum-proof

- tamper-proof

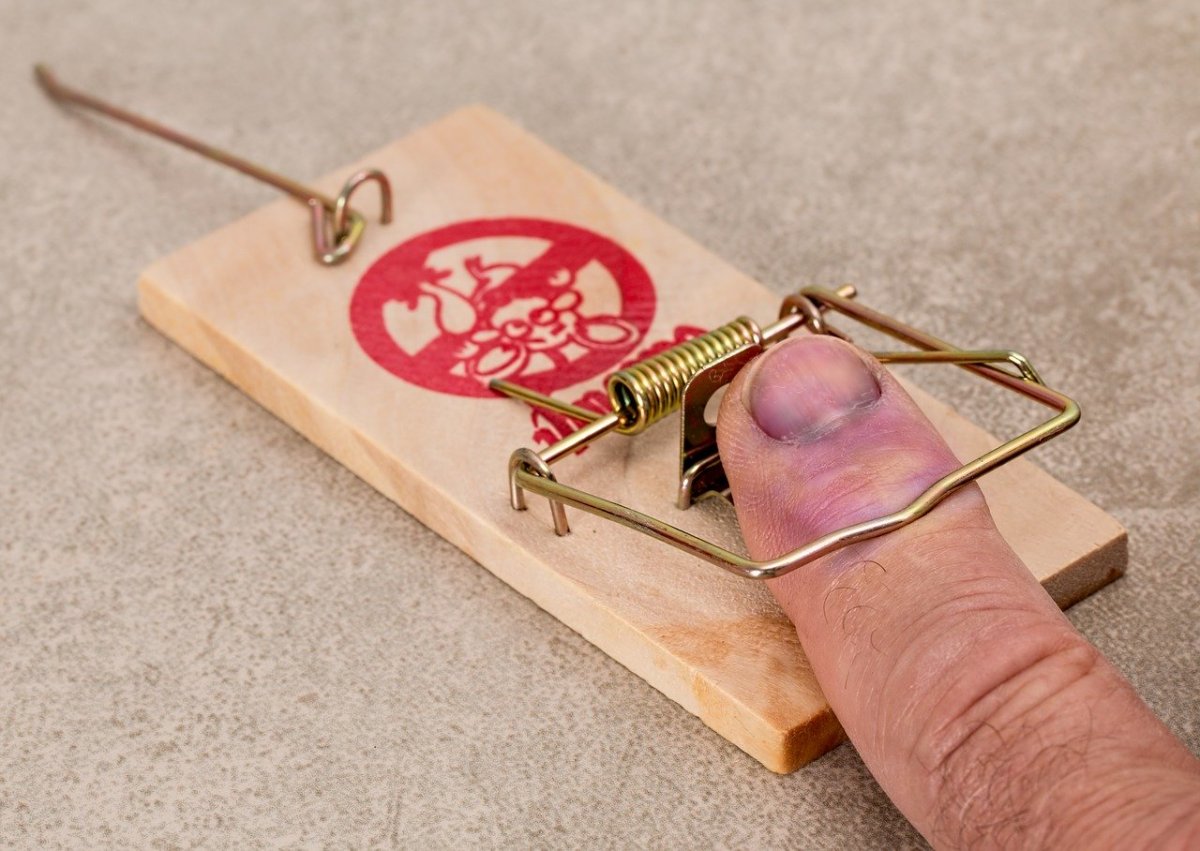

I’ll start with the latter, because it’s more clear-cut (and easier to explain). Some systems – typically hardware systems – are deployed in environments where bad people might mess with them. This, in the trade, is called “tampering”, and it has a slightly different usage from the normal meaning: it tends to imply that the attacker intended not to stop the normal operation of a system or component, but to alter it in such a way that they could gain some advantage (often, but not always, snooping on activities being performed). That may have been the intention, but the damage may actually have stopped, or at least affected, normal operation, whether or not the attacker gained the advantage they were attempting. The problem with saying that any system is tamper-proof is that it clearly isn’t, particularly if you accept the second part of the definition, but possibly even if you don’t. And it’s pretty much impossible to be sure, for the same reason that the adage that “any fool can create a cryptographic protocol that he/she can’t break” is true: you can’t assess the skills and abilities of all future attackers of your system. The best you can do is make it tamper-evident: put such controls in place that it should be clear if someone tries to tamper with the system[3].
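Tamper-evidence is mostly discussed for hardware, but a rough software analogue may make the idea more concrete. The sketch below is an illustration of my own rather than any standard: the function names `seal` and `first_tampered_index` are made up, and it assumes the verifier holds a secret key that the attacker does not. Each record gets a keyed tag that also covers the previous tag, so changing or deleting an earlier record should show up when the chain is checked.

```python
import hashlib
import hmac

# Fixed starting value for the chain (an arbitrary choice for this sketch).
GENESIS = b"\x00" * 32


def seal(records, key):
    """Return (record, tag) pairs; each tag covers the record and the previous tag."""
    sealed = []
    prev_tag = GENESIS
    for record in records:
        tag = hmac.new(key, prev_tag + record, hashlib.sha256).digest()
        sealed.append((record, tag))
        prev_tag = tag
    return sealed


def first_tampered_index(sealed, key):
    """Return the index of the first entry whose tag fails to verify, or None."""
    prev_tag = GENESIS
    for i, (record, tag) in enumerate(sealed):
        expected = hmac.new(key, prev_tag + record, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            return i
        prev_tag = tag
    return None


log = seal([b"door opened", b"door closed", b"firmware updated"], b"demo key")
log[1] = (b"nothing happened", log[1][1])  # alter a record, keep its old tag
print(first_tampered_index(log, b"demo key"))
```

Note what this does not do: it won’t notice entries chopped off the end of the log, and it is no help at all against an attacker who gets hold of the key. It makes some tampering evident; it doesn’t make anything “proof”.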

“Quantum-safe” is another such phrase. It refers to cryptographic protocols or primitives which are designed to be resistant to attacks by quantum computers. The phrase “quantum-proof” is also used, and the problem with both of these terms is that, since nobody has yet completed a quantum computer of sufficient complexity even to try, we can’t be sure. Even once they do, we probably won’t be sure, as people will probably come up with new and improved ways of using them to attack the protocols and primitives we’ve been describing. And what’s annoying is that the key to what we should be saying is actually in the description I gave: they are meant to be resistant to such attacks. “Quantum-resistant” is a much more descriptive and accurate phrase[5], so why not use it?

The simple answer to that question, and to the question of why people use phrases like “tamper-proof” and “secure”, is that it makes better marketing copy. Ill-informed customers are more likely to buy something which is “safe” or which is “proof” against something, rather than evidencing it, or being resistant to it. Well, part of our job as security professionals is to try to educate those customers, and make them less ill-informed[6]. Let’s make security “marketing-proof”. Or … maybe not.

1 – so much so that I’m actually writing a book about it[2].

2 – not just the concept of “zero-trust”, but about trust in general.

3 – sometimes, the tamper-evidence is actually intentionally destroying the capabilities of a system so that you can be pretty sure that the attacker wasn’t able to make it do things it wasn’t supposed to[4].

4 – which is pretty cool, though it does mean that you can’t make it do the things it was supposed to either, of course.

5 – well, I’m assuming that most of such mechanisms are resistant, of course…

6 – I fully accept that “better-informed” would be a better choice of phrase here.